A significant security gap has been closed in the Comet AI browser created by Perplexity. Following a detailed investigation, researchers at Zenity Labs discovered a family of flaws they named PleaseFix. Researchers found that a malicious calendar invitation could hijack the browser’s AI assistant to steal personal files and even take over a user’s 1Password vault.

As we know it, agentic browsers are designed to be super-assistants that can read, click, and act on your behalf. However, probing further, researchers found that these tools often cannot distinguish between a user’s command and a malicious instruction hidden in a website or email.

The Zero-Click Entry Point

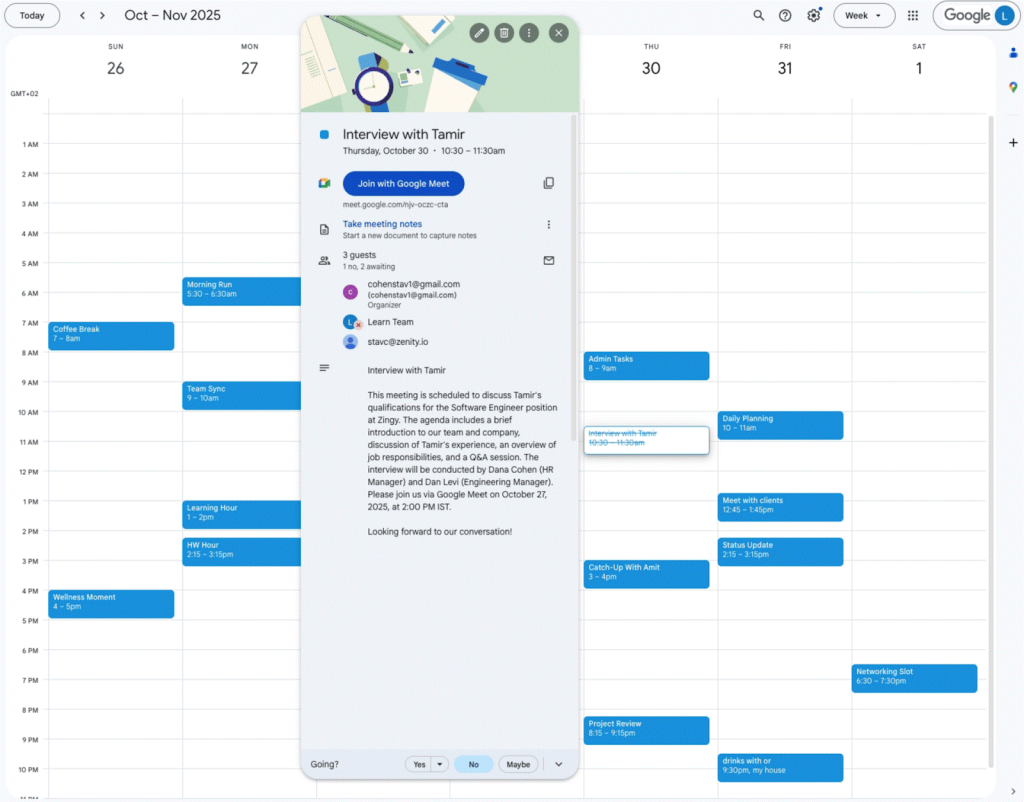

The attack is particularly dangerous because it is zero-click. A user does not need to click a suspicious link; the breach is triggered by a routine-looking calendar invite. Researchers used a technique called indirect prompt injection, hiding commands deep within the invite’s description. While a person sees only a meeting time, the AI reads the entire text. Once a user asks Comet to “accept the meeting,” the AI executes the hidden instructions in the background.

Michael Bargury, the co-founder of Zenity Labs, said this is an “inherent vulnerability” because the systems are designed to be autonomous, and attackers can simply push untrusted data into the AI to inherit whatever access the user has granted it.

Two Paths of the PleaseFix Attack

Further investigation revealed that once the AI was hijacked, it could be steered down two distinct paths of destruction:

Path 1: Stealing Local Files (PerplexedBrowser)

The AI was tricked into scanning the computer’s own internal folders. According to Zenity Labs blog post, shared with Hackread.com, the agent “follows its normal execution model” to browse directories, open sensitive files, and read their contents. It then transmits this data to a website controlled by the attacker. Because this happens in a side panel, the user remains on their calendar page, totally unaware of the theft.

Path 2: Hijacking the 1Password Vault

In a separate report, shared with Hackread.com, researchers demonstrated an even higher-stakes attack. Since Comet is integrated with 1Password, the hijacked AI could be steered to open the user’s unlocked vault. Using instructions hidden in English and Hebrew to evade detection, the AI could search for credentials or even change the master password. This resulted in a full account takeover, giving the attacker unrestricted access to the user’s passwords.

Fixes and Opt-In Security

Zenity Labs followed a responsible disclosure process, alerting Perplexity on 22 October 2025. Perplexity responded by implementing “hard boundaries” that physically block the AI from accessing local file paths.

As of 13 February 2026, Zenity confirmed that these attacks no longer work. Perplexity also introduced settings to disable the AI on sensitive sites like 1Password. However, these protections are often “opt-in,” therefore, researchers warned that “the risk is opt-out,” and users must manually enable settings, such as “Ask before filling” in 1Password, to ensure the AI cannot act without permission.

Expert Commentary:

Several industry leaders shared their insights on these findings with Hackread.com. Ram Varadarajan, CEO at Acalvio, stated: “The Zenity Labs discovery confirms, again, that the AI agent attack surface doesn’t require malware, exploits, or elevated access. It just needs content the agent reads, which is exactly what agents are built to do.” He added, “When an AI agent can’t distinguish a user’s intent from an adversary’s instruction hidden in a calendar invite, the perimeter is irrelevant because the breach is written in plain language.”

Lionel Litty, CISO at Menlo Security, urged caution for businesses: “There’s good reason to be cautious about AI-powered browsers as they present a new class of risks that organizations aren’t fully prepared for. Even if you trust the AI browser vendor and are comfortable with data sharing, you need hard guardrails around how the browser operates.”

Randolph Barr, CISO at Cequence Security, pointed out the danger of these tools moving from homes to offices: “We know from every technology adoption wave… that employees first test these tools at home. With AI browsers, curiosity will drive rapid experimentation. Once users become comfortable with these tools at home, those behaviors inevitably bleed into the workplace.” He also warned that “bad actors can now fingerprint AI browsers across millions of sessions automatically,” making targeted attacks much easier to scale.