Google says it is increasingly using its Gemini AI models to detect and block harmful ads on its advertising platforms, as scammers and threat actors continue to evolve their tactics to evade detection.

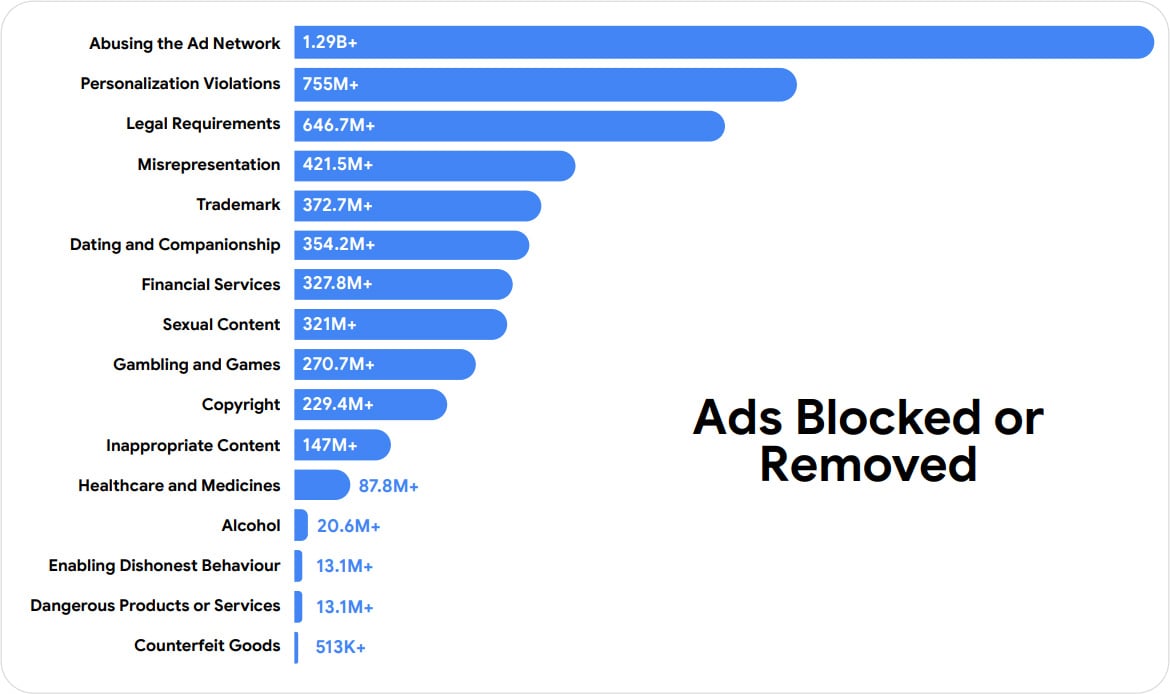

In a new post, the company reports having blocked or removed 8.3 billion ads and suspended 24.9 million advertiser accounts in 2025, including 602 million ads tied to scams.

Malvertising has been a long-standing problem on Google’s ad network, with attackers purchasing ads that impersonate legitimate brands and services in order to push malware, steal cryptocurrency, or lead users to phishing sites.

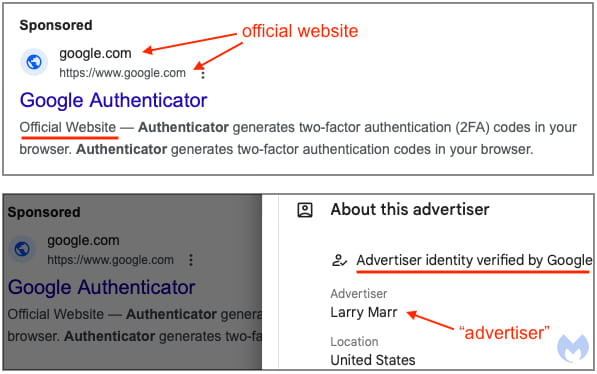

These advertising campaigns commonly use cloaking techniques and URL redirects to pass as trusted websites, including by displaying Google’s own domains and those of legitimate software download pages and authentication portals.

Recent campaigns reported by BleepingComputer include fake login pages designed to steal Google Ads accounts, ads impersonating tools like Google Authenticator and Homebrew to distribute trojanized software, and ads for websites posing as cryptocurrency platforms that drain visitors’ cryptocurrency wallets.

Source: Malwarebytes

According to Google, cybercriminals are now using generative AI in these campaigns, enabling them to build more sophisticated, larger-scale operations rapidly.

“Bad actors are using generative AI to create deceptive ads at scale, and Gemini helps us detect and block them in real time. By the end of last year, the majority of Responsive Search Ads created in Google Ads were reviewed instantly, and harmful content was blocked at submission — a capability we plan to bring to more ad formats this year,” explains Keerat Sharma, VP & General Manager, Ads Privacy and Safety.

To defend against these AI-assisted campaigns, Google says it now relies heavily on Gemini-powered systems to automate the discovery and blocking of malicious ads before they are shown to users.

While previous detection systems scanned ads for keywords associated with malicious behavior, Google says Gemini can analyze billions of signals, including advertiser behavior, account history, campaign patterns, and intent, to determine whether an ad is malicious.

In the United States, Google says it removed 1.7 billion ads and suspended 3.3 million advertiser accounts in 2025, with “abusing the ad network” and “misrepresentation” being the top two policy violations.

Artificial intelligence has enhanced Google’s response to malicious and scam ads that slip through the initial review process, allowing the company to process user reports much faster than in previous years.

Google has also said the increased accuracy of its AI models has reduced incorrect advertiser suspensions by 80%.

The company says it will continue expanding Gemini’s use across additional ad formats and enforcement systems, aiming to block malicious campaigns at submission time.
